Is your current quality monitoring program having a positive impact on your agents, customers and call center? In many call centers, quality scores are high but customer satisfaction is not. Quality scores range in the 90th percentile, yet when management randomly listens to calls, it is not uncommon for them to observe below-average customer service and incorrect information provided to customers. Do your frontline agents believe the quality process is punitive? Do they resist applying feedback provided by quality coaches, so that their performance does not improve and your quality monitoring efforts are wasted? Sadly, these scenarios are common in call centers and, as a result, the work done in quality departments is not highly regarded by management, frontline agents or the call center as a whole. In this article, we will focus on one underlying principle that is sabotaging the success of your quality monitoring (QM) program.

Quality Standards are Too Subjective. This is by far the most common complaint made about QM and is the most damaging to the integrity of quality programs. When the frontline staff perceive quality as subjective, they are inclined to use this as an excuse for not taking their quality scores seriously, and they are inclined not to buy in to feedback from their call coaching sessions. I think we would all agree that if quality monitoring does not bring about agent improvement, then what’s the point? In addition, subjective standards leave performance open to interpretation, resulting in inconsistent service to customers. In the end, you have a group of agents all doing their best (we hope!) but going about it in different ways. And you have quality analysts and supervisors all doing their best (again, we hope!) to evaluate calls and provide feedback for improvement, but they are all relying on their own unique understanding of quality expectations, resulting in a number of variations in how calls should be handled and processes followed. Therefore, coming up with OBJECTIVE quality standards must be the number one focus when developing quality evaluation forms.

So, how do you do this? It’s all about semantics, the words we select to identify the performance expectations. It comes down to identifying behavior-based quality standards that are specific and concrete. The two “tests” for behavior-based standards are as follows: Are they observable? And are they coachable? Let’s take the first one: observable. Something is observable when it can be seen (and in the case of call monitoring, heard) and measured. If a group of quality analysts are listening to a call and hear the agent say, “May I have your name and account number,” we could all confirm this step in the verification process took place (observation). If “verifies caller’s identity” is worth 10 points on the quality form, we would be able to measure it, report on it and so on. This is an example of an observable, behavior-based standard.
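For teams that keep their quality form in a spreadsheet or a small script, the idea of an observable standard can be sketched as a simple yes/no tally. This is only an illustration under stated assumptions: the `Standard` record, the `score_call` helper and the second standard with its 5-point weight are hypothetical; only “verifies caller’s identity” at 10 points comes from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Standard:
    """One line on the quality form: a behavior and its point value."""
    name: str
    points: int


def score_call(standards, observed):
    """Return (earned, possible) points for one evaluated call.

    `observed` maps a standard's name to True if the evaluator heard the
    behavior on the call and False otherwise. A standard with no entry is
    treated as not observed.
    """
    possible = sum(s.points for s in standards)
    earned = sum(s.points for s in standards if observed.get(s.name, False))
    return earned, possible


# Hypothetical two-item form; only the first item appears in the article.
form = [
    Standard("verifies caller's identity", 10),
    Standard("offers additional assistance before closing", 5),  # assumed
]

observed = {
    "verifies caller's identity": True,
    "offers additional assistance before closing": False,
}

earned, possible = score_call(form, observed)
print(f"{earned}/{possible}")  # prints 10/15
```

Because each item is a behavior that was either heard or not heard, two evaluators listening to the same call should record the same answers and arrive at the same score.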

The second criterion for determining whether or not a standard is behavior-based is to test the coachability. In other words, can you demonstrate it? Let’s say one of your quality standards is “responds in a caring manner.” Just what is caring? Some believe agents need to apologize to meet this end. Others might say there is no need to apologize unless a mistake was actually made and that caring is more about an agent’s reaction to a caller’s concern, but how specifically are they supposed to act when a caller is upset? And still others might interpret caring as being something in the tone of the agent’s voice. There is really no right or wrong in these three versions. They just expose the fact that there are many different ways to show you care, some probably better than others. But if an agent gets marked off for not “caring” because they didn’t perform the way preferred by the person evaluating the call, this is a problem. As a quality analyst or supervisor, am I able to demonstrate how to “respond in a caring manner?” And would my demonstrated example be consistent with the demonstrations of my peers, who are also out there coaching other frontline agents on how best to do this? You can see that subjective quality standards cause a ripple effect of inconsistency in performance and frustration for the frontline agents because the performance expectation is unclear, ambiguous, and open to individual interpretation.

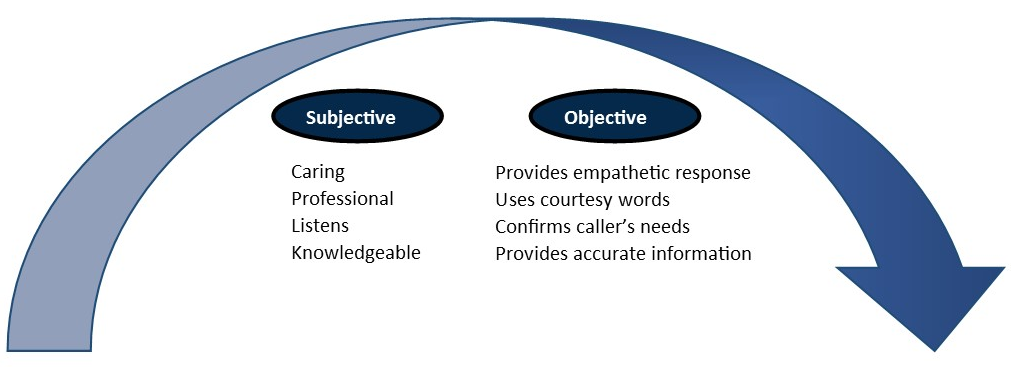

The illustration below provides additional examples of subjective terminology often used by call centers on their quality monitoring form, along with examples of objective, behavior-based alternatives.

By now you are probably beginning to think about how observable and coachable apply to YOUR quality performance standards. If you can only talk about a quality standard and are not able to actually demonstrate it, then you know that standard needs to be improved and made into a more objective, behavior-based one. Rather than “responds in a caring manner,” how about “provides empathetic responses”? But we can’t stop here. That is still not enough direction, because many agents and call evaluators are not experienced in or knowledgeable about the art of empathetic responses. You must provide an additional level of detail in your comprehensive Quality Standards Definitions (QSDs).

setting. This is not the same as scripting call responses. In your QSDs, you are simply providing concrete examples that your agents can relate to and model as they learn and build their own repertoire of effective call handling behaviors.

The last item to be included in your QSDs is the supporting call center and/or company goal represented by that quality standard. Your call center and company goals provide the foundation for your quality program and should be referred to not only in your QSDs but also your call coaching sessions as you discuss the impact of agent performance on the company and customer.

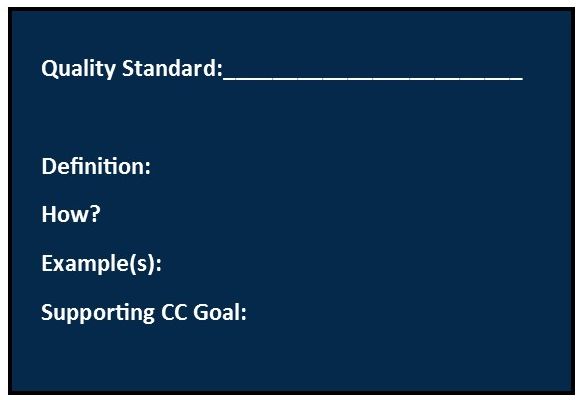

Below is a sample definition for a simple, yet critical quality standard.

How: Ask caller for account number and compare account number with CIS data field. For second level of security, verify customer address and phone number. If caller is unable to provide account number, request Level 2 Security Check (see Verification Procedures in CC Process Manual). If address/phone different from CIS, update CIS before proceeding with call.

Example: “For security purposes, may I please have your account number?”

Not acceptable: “Can I have your account number?”

Supporting Goal: To protect our customer’s account privacy and maintain regulatory compliance so that we do not put the company at risk. Also, as we maintain relationships with our customers, the accuracy of their contact information is essential so we can reach them when future communication is desired for billing or marketing purposes.
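If you maintain your QSDs electronically, the parts of the sample definition above (How, Example, Not acceptable, Supporting Goal) can be kept as one record per standard so that incomplete definitions are easy to spot. The sketch below is a suggestion only: the `QualityStandardDefinition` class, its field names and the `missing_parts` check are hypothetical, and the standard name “Verifies caller’s identity” is assumed from the earlier verification example because the sample itself does not state a title.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class QualityStandardDefinition:
    """One QSD entry, mirroring the parts of the sample definition."""
    standard: str
    how: str
    examples: List[str]
    not_acceptable: List[str]
    supporting_goal: str

    def missing_parts(self) -> List[str]:
        """Names of any parts left empty (blank text or an empty list)."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Text abbreviated from the sample definition; the standard name is assumed.
verify_identity = QualityStandardDefinition(
    standard="Verifies caller's identity",
    how="Ask caller for account number and compare it with the CIS data field.",
    examples=["For security purposes, may I please have your account number?"],
    not_acceptable=["Can I have your account number?"],
    supporting_goal="Protect customer account privacy and maintain regulatory compliance.",
)

# A draft that names the behavior but gives agents nothing to model yet.
draft = QualityStandardDefinition(
    standard="Provides empathetic responses",
    how="",
    examples=[],
    not_acceptable=[],
    supporting_goal="",
)

print(verify_identity.missing_parts())  # prints []
print(draft.missing_parts())
```

A draft that reports missing parts is a signal that the standard can be talked about but not yet demonstrated, which is the coachability test described earlier.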

Revamping YOUR Quality Evaluation Program. We’ve just scratched the surface on ways to improve your call center quality evaluation form by (1) improving your standards so they will be more objective and behavior-based and (2) creating detailed QSDs for each quality standard to clarify performance expectations and ensure consistency among your call evaluators and coaches. There are many more things you can do to revitalize your quality program, so we’ve put together a 7-Part QUALITY Series to help your quality specialists, supervisors and even call center managers better leverage quality monitoring and call coaching to impact your call center business. We hope you will join us for some FAQ (Friday Afternoon Quality) starting the first week of March!


By Deelee Freeman, Call Center Training Associates, Inc.

Deelee Freeman is the Director of Call Center Training Associates, providing training and consulting services for call centers. She can be reached at 404-630-2156 or dfreeman@callcentertrainingassociates.com

Copyright ©2015 Call Center Training Associates, Inc.